I’m trying to parse a JSON file. I have written a really ugly expression that parses it correctly, and I have an extractor-based solution that silently parses to an empty list.

I found the extractor-based approach in a discussion on Stack Overflow. It looked straightforward at first, until I actually tried to use it on a real example.

I have several issues.

- I’m importing `import scala.util.parsing.json.JSON`, which works for me, but when I try to copy the code to Scastie I get an error that `object parsing is not a member of package util`. Should I avoid using `scala.util.parsing.json`?

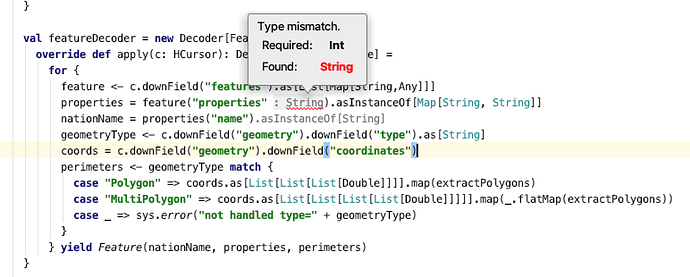

- The ugly version of my code, filled with lots of `.asInstanceOf[List[List[List[Double]]]]`, works. But the version using a `for` comprehension silently fails. Unfortunately, inserting `_ = println(this and that)` does nothing. Is this related to this previous issue where the `for` comprehension is not optimized for debugging? How can I convince the `println` to actually print the value rather than being lazily ignored?

- Using the style of JSON parsing suggested by shahjapan, how can I iterate two different ways depending on a condition? E.g., depending on the value of `geometryType`, I need to interpret the type of `coordinates` differently, so I still fill out the `for` comprehension with lots of `.asInstanceOf[thisandthat]`.

    val try1 = for {
      // L(features) <- geo.asInstanceOf[Map[String,Any]]("features")
      M(countryFeature) <- features.asInstanceOf[List[Any]]
      _ = println(countryFeature)
      S(countryName) = countryFeature("name")
      M(geometry) = countryFeature("geometry")
      S(geometryType) = geometry("type")
      L(coordinates) = geometry("coordinates")
      perimeters <- geometryType match {
        case "Polygon" => extractPolygons(coordinates.asInstanceOf[List[List[List[Double]]]])
        case "MultiPolygon" => coordinates.asInstanceOf[List[List[List[List[Double]]]]].flatMap(extractPolygons)
        case _ => sys.error("not handled type="+geometry("type"))
      }
    } yield (countryName,perimeters)
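
For context, the extractors `M`, `S`, and `L` used above are not defined in the post. The following is only a sketch of what such extractors might look like, assuming each one wraps a runtime type check. The `CC` class, the `ExtractorSketch` object, the `describe` helper, and the sample data are all hypothetical and are not taken from the original code or from the Stack Overflow discussion.

```scala
import scala.reflect.ClassTag

// Hypothetical reconstruction: the post does not show how M, L, and S are defined.
// Each extractor succeeds only when the runtime class of the value fits T.
// Element types (e.g. the String and Any in Map[String, Any]) are erased and not checked.
class CC[T](implicit tag: ClassTag[T]) {
  def unapply(a: Any): Option[T] = a match {
    case t: T => Some(t) // checked through the implicit ClassTag
    case _    => None    // a mismatch is reported as "no match", not as an exception
  }
}

object M extends CC[Map[String, Any]]
object L extends CC[List[Any]]
object S extends CC[String]

object ExtractorSketch {
  // Made-up sample shaped like one parsed feature; not taken from the real file.
  val feature: Any = Map[String, Any](
    "name" -> "Atlantis",
    "geometry" -> Map[String, Any](
      "type" -> "Polygon",
      "coordinates" -> List(List(List(0.0, 1.0)))
    )
  )

  // Shows which extractor accepts a given value.
  def describe(value: Any): String = value match {
    case M(m)  => "map with keys " + m.keys.toList.sorted.mkString(",")
    case L(l)  => "list of " + l.size
    case S(s)  => "string " + s
    case other => "unmatched: " + other
  }
}
```

With extractors of this shape, a value of the wrong type makes `unapply` return `None`. In Scala 2, a pattern on the left of `<-` that does not match skips that element instead of raising an error, so a generator whose extractor rejects every element produces an empty result without any message.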